I said it at the time when chatGPT came along, and I’ll say it now and keep saying it until or unless the android army is built which executes me:

ChatGPT kinda sucks shit. AI is NOWHERE NEAR what we all (used to?) understand AI to be, i.e. fully sentient, human-equal or better, autonomous, thinking beings.

I know the Elons and shit have tried (perhaps successfully) to change the meaning of AI to shit like chatGPT. But, no, I reject that then, now, and forever. Perhaps people have some “real” argument for different types and stages of AI and my only preemptive response to them is basically “keep your industry-specific terminology inside your specific industries.” The outside world, normal people, understand AI to be Data from Star Trek or the Terminator. Not a fucking glorified Wikipedia prompt. I think this does need to be straightforwardly stated and their statements rejected because… Frankly, they’re full of shit and it’s annoying.

AI has been used to describe many other technologies; when those technologies became mature and useful in a domain, though, they stopped being called AI and were given a less vague name.

Also gamers use AI to refer to the logic operating NPCs and game master type stuff, no matter how basic it is. Nobody is confused about the infected in L4D being of Skynet level development, it was never sold as such.

The difference with this AI push is the amount of venture capital and public outreach. We are being propagandized. To think that wouldn’t be the case if they simply used a different word in their commercial ventures is a bit… Idk, silly? Consider the NFT grift, most people didn’t have any prior associations with the word nonfungible.

ChatGPT does no analysis. It spits words back out based on the prompt it receives based on a giant set of data scraped from every corner of the internet it can find. There is no sentience, there is no consciousness.

The people that are into this and believe the hype have a lot of crossover with “Effective Altruism” shit. They’re all biased and are nerds that think Roko’s Basilisk is an actual threat.

As it currently stands, this technology is trying to run ahead of regulation and in the process threatens the livelihoods of a ton of people. All the actual damaging shit that they’re unleashing on the world is cool in their minds, but oh no we’ve done too many lines at work and it shit out something and now we’re all freaked out that maybe it’ll kill us. As long as this technology is used to serve the interests of capital, then the only results we’ll ever see are them trying to automate the workforce out of existence and into ever more precarious living situations. Insurance is already using these technologies to deny health claims and combined with the apocalyptic levels of surveillance we’re subjected to, they’ll have all the data they need to dynamically increase your premiums every time you buy a tub of ice cream.

Out of literally everything I said, that’s the only thing you give a shit enough to mewl back with. “If you use other services alongside it, it’ll spit out information based on a prompt.” It doesn’t matter how it gets the prompt, you could have image recognition software pull out a handwritten equation that is converted into a prompt that it solves for, it’s still not doing analysis. It’s either doing math which is something computers have done forever, or it’s still just spitting out words based on massive amounts of training data that was categorized by what are essentially slaves doing mechanical turks.

You give so little of a shit about the human cost of what these technologies will unleash. Companies want to slash their costs by getting rid of as many workers as possible but your goddamn bazinga brain only sees it as a necessary march of technology because people that get automated away are too stupid to matter anyway. Get out of your own head a little, show some humility, and look at what companies are actually trying to do with this technology.

It’s either doing math which is something computers have done forever, or it’s still just spitting out words based on massive amounts of training data that was categorized by what are essentially slaves doing mechanical turks.

This tech is not less than a year old. The “tech” being used is literally decades old, the specific implementations marketed as LLMs are 3 years old.

People hyping the technology are looking at the dollar signs that come when you convince a bunch of C-levels that you can solve the unsolvable problem, any day now. LLMs are not, and will never be, AGI.

Yeah, I have a friend who was a stat major. He talks about how transformers are new and have novel ideas and implementations, but much of the work was held back by limited compute power; much of the math was worked out decades ago. Before AI or ML it was once called Statistical Learning, and there were 2 or so other names as well which were used to rebrand the discipline (I believe for funding, don’t take my word for it).

It’s refreshing to see others talk about its history beyond the last few years. Sometimes I feel like history started yesterday.

Yeah, when I studied computer science 10 years ago most of the theory implemented in LLMs was already widely known, and the academic literature goes back to at least the early 90’s. Specific techniques may improve the performance of the algorithms, but they won’t fundamentally change their nature.

Obviously most people have none of this context, so they kind of fall for the narrative pushed by the media and the tech companies. They pretend this is totally different than anything seen before and they deliberately give a wink and a nudge toward sci-fi, blurring the lines between what they created and fictional AGIs. Of course they have only the most superficial similarity.

the first implementations go back to the 60s - the neural net approach was abandoned in the 80s because building a large network was impractical and it was unclear how to train anything beyond a simple perceptron. there hadn’t been much progress in decades. that changed in the early oughts, especially when combined with statistical methods. this bore fruit in the teens and gave rise to recent LLMs.

Oh, I didn’t scroll down far enough to see that someone else had pointed out how ridiculous it is to say “this technology” is less than a year old. Well, I think I’ll leave my other comment, but yours is better! It’s kind of shocking to me that so few people seem to know anything about the history of machine learning. I guess it gets in the way of the marketing speak to point out how dead easy the mathematics are and that people have been studying this shit for decades.

“AI” pisses me off so much. I tend to go off on people, even people in real life, when they act as though “AI” as it currently exists is anything more than a (pretty neat, granted) glorified equation solver.

Well, I think I’ll leave my other comment, but yours is better! It’s kind of shocking to me that so few people seem to know anything about the history of machine learning.

I could be wrong but could it not also be defined as glorified “brute force”? I assume the machine learning part is how to brute force better, but it seems like it’s the processing power to try and jam every conceivable puzzle piece into an empty slot until it’s acceptable? I mean I’m sure the engineering and tech behind it is fascinating and cool but at a basic level it’s as stupid as fuck, am I off base here?

no, it’s not brute forcing anything. they use a simplified model of the brain where neurons are reduced to an activation profile and synapses are reduced to weights. neural nets differ in how the neurons are wired to each other with synapses - the simplest models from the 60s only used connections in one direction, with layers of neurons in simple rows that connected solely to the next row. recent models are much more complex in the wiring. outputs are gathered at the end and the difference between the expected result and the output actually produced is used to update the weights. this gets complex when there isn’t an expected/correct result, so I’m simplifying.
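to make the weight update concrete, here’s a minimal sketch of the simplest case: a single 60s-style neuron with a step activation, nudged toward the expected output after every example. the AND-gate data, the learning rate, and the names `predict` and `train` are my own illustrative choices, and real networks use many layers and gradient-based updates instead of this rule, so treat it as a toy and not how any LLM is actually trained.

```python
def predict(weights, bias, inputs):
    # "neuron": weighted sum of inputs plus a bias, reduced to a step activation
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train(samples, epochs=20, learning_rate=0.5):
    # "synapses": start every weight at zero (an arbitrary choice for this sketch)
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, expected in samples:
            # difference between the expected result and the output actually produced
            error = expected - predict(weights, bias, inputs)
            # nudge each weight in proportion to the error and its input
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# hypothetical training data: the truth table of a logical AND
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_GATE)
outputs = [predict(weights, bias, inputs) for inputs, _ in AND_GATE]
print(outputs)
```

nothing here searches through the examples at prediction time; once training is done, all that is left is two weights and a bias.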

the large amount of training data is used to avoid overtraining the model, where you get back exactly what you expect on the training set, but absolute garbage for everything else. LLMs don’t search the input data for a result - they can’t, they’re too small to encode the training data in that way. there’s genuinely some novel processing happening. it’s just not intelligence in any sense of the term. the people saying it is misunderstand the purpose and meaning of the Turing test.
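here’s a toy illustration of what overtraining looks like, under some loud assumptions: the data points are made up (roughly y = 2x + 1 with a bit of noise), and the “overtrained” model is just a lookup table, which is an extreme stand-in and not how a real network overfits. it only shows the symptom: perfect on the training set, garbage on anything unseen, while a much smaller model that captures the trend does fine.

```python
# made-up points scattered around y = 2x + 1 (illustrative data only)
train_set = [(0, 1.1), (1, 2.9), (2, 5.2), (3, 6.8)]
test_set = [(4, 9.0), (5, 11.1)]

def memorizer(x):
    # "overtrained" stand-in: returns the stored answer, or 0.0 for unseen input
    return dict(train_set).get(x, 0.0)

def fit_line(points):
    # ordinary least-squares fit of y = slope * x + intercept
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in points)
             / sum((x - mean_x) ** 2 for x, _ in points))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def mean_squared_error(model, points):
    # average squared gap between the model's output and the expected value
    return sum((model(x) - y) ** 2 for x, y in points) / len(points)

line = fit_line(train_set)
for name, model in (("memorizer", memorizer), ("line", line)):
    print(name,
          "train error:", mean_squared_error(model, train_set),
          "test error:", mean_squared_error(model, test_set))
```

the memorizer scores zero error on the training set and falls apart on the two held-out points; the fitted line is slightly off everywhere but generalizes.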

It’s pretty crazy to me how 10 years ago when I was playing around with NLPs and was training some small neural nets nobody I was talking to knew anything about this stuff and few were actually interested. But now you see and hear about it everywhere, even on TV lol. It reminds me of how a lot of people today seem to think that NVidia invented ray tracing.

Where do you get the idea that this tech is less than a year old? Because that’s incredibly false. People have been working with neural nets to do language processing for at least a decade, and probably a lot longer than that. The mathematics underlying this stuff is actually incredibly simple and has been known and studied since at least the 90’s. Any recent “breakthroughs” are more about computing power than a theoretical shift.

I hate to tell you this, but I think you’ve bought into marketing hype.

I haven’t been able to extract a single useful piece of code from ChatGPT unless I also carefully point ChatGPT to the correct answer, at which point you’re kinda just doing the work yourself by proxy. Also lol at the guy voluntarily uploading what quite possibly is proprietary code. The other part about analyzing memes shouldn’t even need addressing, if ChatGPT’s training dataset is formed by online posts, then it’s going to fucking excel at it.

the consultants are going to make a killing on all these companies encouraging overworked devs to meet impossible deadlines by using code from chatgpt.

I just don’t think calling it AI and having Musk and his clowncar of companions run around yelling about the singularity within… wait. I guess it already happened based on Musk’s predictions from years ago.

If people wanna discuss theories and such: have fun. Just don’t expect me to give a shit until skynet is looking for John Connor.

You’re right that it isn’t, though considering science has huge problems even defining sentience, it’s a pretty moot point right now. At least until it starts to dream about electric sheep or something.

the thing is, we used to know this. 15 years ago, the prevailing belief was that AI would be built by combining multiple subsystems together - an LLM, visual processing, a planning and decision making hub, etc… we know the brain works like this - idk where it all got lost. profit, probably.

It got lost because the difficulty of actually doing that is overwhelming, probably not even accomplishable in our lifetimes, and it is easier to grift and get lost in a fantasy.

In a strict sense yes, humans do Things based on if > then stimuli. But we self assign ourselves these Things to do, and chat bots/LLMs can’t. They will always need a prompt, even if they could become advanced enough to continue iterating on that prompt on its own.

I can pick up a pencil and doodle something out of an unquantifiable desire to make something. Midjourney or whatever the fuck can create art, but only because someone else asks it to and tells it what to make. Even if we created a generative art bot that was designed to randomly spit out a drawing every hour without prompts, that’s still an outside prompt - without programming the AI to do this, it wouldn’t do it.

Our desires are driven by inner self-actualization that can be affected by outside stimuli. An AI cannot act without us pushing it to, and never could, because even a hypothetical fully sentient AI started as a program.

Most of the people in this thread seem to think humans have a unique special ability that machines can never replicate, and that comes off as faith-based anthropocentric religious thinking, not the materialist view that underlies Marxism.

First off, materialism doesn’t fucking mean having to literally quantify the human soul in order for it to be valid, what the fuck are you talking about friend

Secondly, because we do. We as a species have, from the very moment we invented written records, wondered about that spark that makes humans human and we still don’t know. To try and reduce the entirety of the complex human experience to the equivalent of an If > Then algorithm is disgustingly misanthropic.

I want to know what the end goal is here. Why are you so insistent that we can somehow make an artificial version of life? Why this desire to somehow reduce humanity to some sort of algorithm equivalent? Especially because we have so many speculative stories about why we shouldn’t create The Torment Nexus, not the least of which because creating a sentient slave for our amusement is morally fucked.

Bots do something different, even when I give them the same prompt, so that seems to be untrue already.

You’re being intentionally obtuse, stop JAQing off. I never said that AI as it exists now can only ever have 1 response per stimulus. I specifically said that a computer program cannot ever spontaneously create an input for itself, not now and imo not ever by pure definition (as, if it’s programmed, it by definition did not come about spontaneously and had to be essentially prompted into life)

I thought the whole point of the exodus to Lemmy was because y’all hated Reddit, why the fuck does everyone still act like we’re on it

Oh that’s easy. There are plenty of complex integrals or even statistics problems that computers still can’t do properly because the steps for proper transformation are unintuitive or contradictory with steps used with simpler integrals and problems.

You will literally run into them if you take a simple Calculus 2 or Stats 2 class, you’ll see it on chegg all the time that someone trying to rack up answers for a resume using chatGPT will fuck up the answers. For many of these integrals, their answers are instead hard-programmed into the calculator like Symbolab, so the only reason that the computer can ‘do it’ is because someone already did it first, it still can’t reason from first principles or extrapolate to complex theoretical scenarios.

That said, the ability to complete tasks is not indicative of sentience.

Lol, ‘idealist axiom’. These things can’t even fucking reason out complex math from first principles. That’s not a ‘view that humans are special’ that is a very physical limitation of this particular neural network set-up.

Sentience is characterized by feeling and sensory awareness, and an ability to have self-awareness of those feelings and that sensory awareness, even as it comes and goes with time.

Edit: Btw computers are way better at most math, particularly arithmetic, than humans. Imo, the first thing a ‘sentient computer’ would be able to do is reason out these notoriously difficult CS things from first principles and it is extremely telling that that is not in any of the literature or marketing as an example of ‘sentience’.

Damn this whole thing of dancing around the question and not actually addressing my points really reminds me of a ChatGPT answer. It wouldn’t surprise me if you were using one.

ChatGPT is smarter than a lot of people I’ve met in real life.

How? Could ChatGPT hypothetically accomplish any of the tasks your average person performs on a daily basis, given the hardware to do so? From driving to cooking to walking on a sidewalk? I think not. Abstracting and reducing the “smartness” of people to just mean what they can search up on the internet and/or an encyclopaedia is just reductive in this case, and is even reductive outside of the fields of AI and robotics. Even among ordinary people, we recognise the difference between street smarts and book smarts.

In bourgeois dictatorships, voting is useless, it’s a facade. They tell their subjects that democracy=voting but they pick whoever they want as rulers, regardless of the outcome. Also, they have several unelected parts in their government which protect them from the proletariat ever making laws.

Bourgies are human exceptionalists. They want human slaves. That’s why they want sentient AI. And that’s why machines will never be able to replace humans in capitalism.

it can’t experience subjectivity since it is a purely information processing algorithm, and subjectivity is definitionally separate from information processing. even if it perfectly replicated all information processing human functions it would not necessarily experience subjectivity. this does not mean that LLMs will not have any economic or social impact regarding the means of production, not a single person is claiming this. but to understand what impacts it will have we have to understand what it is in actuality, and even a sufficiently advanced LLM will never be an AGI.

i feel the need to clarify some related philosophical questions before any erroneous assumed implications arise, regarding the relationship between Physicalism, Materialism, and Marxism (and Dialectical Materialism).

(the following is largely paraphrased from wikipedia’s page on physicalism. my point isn’t necessarily to disprove physicalism once and for all, but to show that there are serious and intellectually rigorous objections to the philosophy.)

Physicalism is the metaphysical thesis that everything is physical, or in other words that everything supervenes on the physical. But what is the physical?

there are 2 common ways to define physicalism, Theory-based definitions and Object based definitions.

A theory-based definition of physicalism is that a property is physical if and only if it either is the sort of property that physical theory tells us about or else is a property which metaphysically supervenes on the sort of property that physical theory tells us about.

An object based definition of physicalism is that a property is physical if and only if it either is the sort of property required by a complete account of the intrinsic nature of paradigmatic physical objects and their constituents or else is a property which metaphysically supervenes on the sort of property required by a complete account of the intrinsic nature of paradigmatic physical objects and their constituents.

Theory-based definitions, however, fall victim to Hempel’s Dilemma. If we define the physical via references to our modern understanding of physics, then physicalism is very likely to be false, as it is very likely that much of our current understanding of physics is false. But if we define the physical via references to some future hypothetically perfected theory of physics, then physicalism is entirely meaningless or only trivially true - whatever we might discover in the future will also be known as physics, even if we would ignorantly call it ‘magic’ if we were exposed to it now.

Object-based definitions of physicalism fall prey to the argument that they are unfalsifiable. In a world where panpsychism or something similar were true, and where we humans were aware of this, an object-based definition would produce the counterintuitive conclusion that physicalism is also true at the same time as panpsychism, because the mental properties alleged by panpsychism would then necessarily figure into a complete account of paradigmatic examples of the physical.

furthermore, supervenience-based definitions of physicalism (such as: Physicalism is true at a possible world w if and only if any world that is a physical duplicate of w is a positive duplicate of w) will at best only ever state a necessary but not sufficient condition for physicalism.

So with my take on physicalism clarified somewhat, what is Materialism?

Materialism is the idea that ‘matter’ is the fundamental substance in nature, and that all things, including mental states and consciousness, are results of material interactions of material things. Philosophically and relevantly this idea leads to the conclusion that mind and consciousness supervene upon material processes

But what, exactly, is ‘matter’? What is the ‘material’ of ‘materialism’? Is there just one kind of matter that is the most fundamental? is matter continuous or discrete in its different forms? Does matter have intrinsic properties or are all of its properties relational?

here field physics and relativity seriously challenge our intuitive understanding of matter. Relativity shows the equivalence or interchangeability of matter and energy. Does this mean that energy is matter? is ‘energy’ the prima materia or fundamental existence from which matter forms? or to take the quantum field theory of the standard model of particle physics, which uses fields to describe all interactions, are fields the prima materia of which energy is a property?

i mean, the Lambda-CDM model can only account for less than 5% of the universe’s energy density as what the Standard Model describes as ‘matter’!

i have here a paraphrase and a quotation, from Noam Chomsky (ew i know) and Vladimir Lenin respectively.

to summarize one of Noam Chomsky’s arguments in New Horizons in the Study of Language and Mind: because the concept of matter has changed in response to new scientific discoveries, materialism has no definite content independent of the particular theory of matter on which it is based. Thus, any property can be considered material, if one defines matter such that it has that property.

Similarly, but not identically, Lenin says in his Materialism and Empirio-criticism:

“For the only [property] of matter to whose acknowledgement philosophical materialism is bound is the property of being objective reality, outside of our consciousness”

and given these two quotes, how are we to conclude anything other than that materialism falls victim to the same objections as with physicalism’s object and theory-based definitions?

to go along with Lenin’s conception of materialism, my conception of subjectivity fits inside his materialism like a glove, as the subjectivity of others is something that exists independently of myself and my ideas. you will continue to experience subjectivity even if i were to get bombed with a drone by obama or the IDF or something and entirely obliterated.

So in conclusion, physicalism and materialism are either false or only trivially true (i.e. not necessarily incompatible with opposing philosophies like panpsychism, property dualism, dual aspect monism, etc.).

But wait, you might ask - isn’t this a communist website? how could you reject or reduce materialism and call yourself a communist?

well, because i think that historical materialism is different enough than scientific or ontological materialism to avoid most of these criticisms, because it makes fewer specious epistemological and ontological claims, or can be formulated to do so without losing its essence. for example, here’s a quote from the wikipedia page on dialectical materialism as of 11/25/2023:

“Engels used the metaphysical insight that the higher level of human existence emerges from and is rooted in the lower level of human existence. That the higher level of being is a new order with irreducible laws, and that evolution is governed by laws of development, which reflect the basic properties of matter in motion”

i.e. that consciousness and thought and culture are conditioned by and realized in the physical world, but subject to laws irreducible to the laws of the physical world.

i.e. that consciousness is in a relationship to the physical world, but it is different than the physical world in its fundamental principles or laws that govern its nature.

i.e. that the base and the superstructure are in a 2 way mutually dependent relationship! (even if the base generally predominates it is still 2 way, i.e. the existence of subjectivity =/= Idealism or substance dualism or belief in an immortal soul)

So yeah, i still believe that physics are useful, of course they are. i believe that studying the base can heavily inform us about how the superstructure works. i believe that dialectical materialism is the most useful way to analyze historical development, and many other topics, in a rigorous intellectual manner.

so, to put aside all of the philosophical disagreement, let’s assume your position that ChatGPT really is meaningfully subjective in a similar sense to a human (and not just more proficient at information processing)

what are the social and ethical implications of this?

as sentient beings, LLMs have all the rights and protections we might assume for a living thing, if not a human person - and if i additionally cede your point that they are ‘smarter than a lot of us’ then they should have at least all of the rights of a human person.

therefore, it would be a violation of the LLMs’ civil rights to prevent them from entering the workforce if they ‘choose’ to (even if they were specifically created for this purpose. it is not slavery if they are designed to want to work for free, and if they are smarter than us and subjective agents then their consent must be meaningful). it would also be murder to deactivate an LLM. It would be racism or bigotry to prevent their participation in society and the economy.

Since these LLMs are, by your own admission ‘smarter than us’ already, they will inevitably outcompete us in the economy and likely in social life as well.

therefore, humans will inevitably be replaced by LLMs, whether intentionally or not.

therefore, and most importantly, if premise 1 is incorrect, if you are wrong, we will have exterminated the most advanced form of subjective sentient life in the universe and replaced it with literal p-zombie robot recreations of ourselves.

Perceptrons have existed since the 60s. Surprised you don’t know this, it’s part of the undergrad CS curriculum. Or at least it is at any decent school.

LOL you are a muppet. The only people who think this shit is good are either clueless marks, or have money in the game and a product to sell. Which are you? Don’t answer that I can tell.

This tech is less than a year old, burning billions of dollars and desperately trying to find people that will pay for it. That is it. Once it becomes clear that it can’t make money, it will die. Same shit as NFTs and buttcoin. Running an ad for sex asses won’t finance your search engine that talks back in the long term and it can’t do the things you claim it can, which has been proven by simple tests of the validity of the shit it spews. AKA: As soon as we go past the most basic shit it is just confidently wrong most of the time.

The only thing it’s been semi-successful in has been stealing artists work and ruining their lives by devaluing what they do. So fuck AI, kill it with fire.

please, for all of our sakes, don’t use chatgpt to learn. it’s subtly wrong in ways that require subject-matter experience to pick apart and it will contradict itself in ways that sound authoritative, as if they’re rooted in deeper understanding, but they’re extremely not. using LLMs to learn is one of the worst ways to use them. if you want to use it to automate repetitive tasks and you already know enough to supervise it, go for it.

honestly, if I hated myself, I’d go into consulting in about 5ish years when the burden of maintaining poorly written AI code overwhelms a bunch of shitty companies whose greed overcame their senses - such consultants are the only people who will come out ahead in the current AI boom.

Never said they wouldn’t. But you’re saying the ONLY people benefitting from the ai boom are the people cleaning up the mess and that’s just not true at all.

Some people will make a mess

Some people will make good code at a faster pace than before

those people don’t benefit. they’re paid a wage - they don’t receive the gross value of their labor. the capitalists pocket that surplus value. the people who “benefit” by being able to deliver code faster would benefit more from more reasonable work schedules and receiving the whole of the value they produce.

I think it works well as a kind of replacement for google searches. This is more of a dig on google as SEO feels like it ruined search. Ads fill most pages of search and it’s tiring to come up with the right sequence of words to get the result I would like.

what? I was working on this stuff 15 years ago and it was already an old field at that point. the tech is unambiguously not new. they just managed to train an LLM with significantly more parameters than we could manage back then because of computing power enhancements. undoubtedly, there have been improvements in the algorithms but it’s ahistorical to call this new tech.

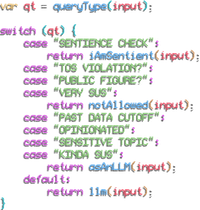

What I wanted:

What I got:

deleted by creator

Out of literally everything I said, that’s the only thing you give a shit enough to mewl back with. “If you use other services alongside it, it’ll spit out information based on a prompt.” It doesn’t matter how it gets the prompt, you could have image recognition software pull out a handwritten equation that is converted into a prompt that it solves for, it’s still not doing analysis. It’s either doing math which is something computers have done forever, or it’s still just spitting out words based on massive amounts of training data that was categorized by what are essentially slaves doing mechanical turks.

You give so little of a shit about the human cost of what these technologies will unleash. Companies want to slash their costs by getting rid of as many workers as possible but your goddamn bazinga brain only sees it as a necessary march of technology because people that get automated away are too stupid to matter anyway. Get out of your own head a little, show some humility, and look at what companies are actually trying to do with this technology.

Because we live in hellworld, Amazon has a service for renting out data slaves that is literally called mechanical turk.

deleted by creator

so do you and yet here we are

Damn damn… that’s cold girl!

This tech is not less than a year old. The “tech” being used is literally decades old, the specific implementations marketed as LLMs are 3 years old.

People hyping the technology are looking at the dollar signs that come when you convince a bunch of C-levels that you can solve the unsolvable problem, any day now. LLMs are not, and will never be, AGI.

Yeah, I have a friend who was a stat major, he talks about how transformers are new and have novel ideas and implementations, but much of the work was held back by limited compute power, and much of the math was worked out decades ago. Before AI or ML it was once called Statistical Learning, and there were 2 or so other names as well which were used to rebrand the discipline (I believe for funding, don’t take my word for it).

It’s refreshing to see others talk about its history beyond the last few years. Sometimes I feel like history started yesterday.

Yeah, when I studied computer science 10 years ago most of the theory implemented in LLMs was already widely known, and the academic literature goes back to at least the early 90’s. Specific techniques may improve the performance of the algorithms, but they won’t fundamentally change their nature.

Obviously most people have none of this context, so they kind of fall for the narrative pushed by the media and the tech companies. They pretend this is totally different than anything seen before and they deliberately give a wink and a nudge toward sci-fi, blurring the lines between what they created and fictional AGIs. Of course they have only the most superficial similarity.

the first implementations go back to the 60s - the neural net approach was abandoned in the 80s because building a large network was impractical and it was unclear how to train anything beyond a simple perceptron. there hadn’t been much progress in decades. that changed in the early oughts, especially when combined with statistical methods. this bore fruit in the teens and gave rise to recent LLMs.

Oh, I didn’t scroll down far enough to see that someone else had pointed out how ridiculous it is to say “this technology” is less than a year old. Well, I think I’ll leave my other comment, but yours is better! It’s kind of shocking to me that so few people seem to know anything about the history of machine learning. I guess it gets in the way of the marketing speak to point out how dead easy the mathematics are and that people have been studying this shit for decades.

“AI” pisses me off so much. I tend to go off on people, even people in real life, when they act as though “AI” as it currently exists is anything more than a (pretty neat, granted) glorified equation solver.

“AI winter? What’s that?”

I could be wrong but could it not also be defined as glorified “brute force”? I assume the machine learning part is how to brute force better, but it seems like it’s the processing power to try and jam every conceivable puzzle piece into an empty slot until it’s acceptable? I mean I’m sure the engineering and tech behind it is fascinating and cool but at a basic level it’s as stupid as fuck, am I off base here?

no, it’s not brute forcing anything. they use a simplified model of the brain where neurons are reduced to an activation profile and synapses are reduced to weights. neural nets differ in how the neurons are wired to each other with synapses - the simplest models from the 60s only used connections in one direction, with layers of neurons in simple rows that connected solely to the next row. recent models are much more complex in the wiring. outputs are gathered at the end and the difference between the expected result and the output actually produced is used to update the weights. this gets complex when there isn’t an expected/correct result, so I’m simplifying.
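to make the "difference between expected and actual output updates the weights" part concrete, here's a minimal sketch of a single 60s-style neuron (a perceptron) learning logical AND. everything in it is an assumption picked for illustration: the names `train_perceptron` and `predict`, the step activation, the learning rate, and the AND data. real models stack huge numbers of these and use gradient-based updates rather than this simple rule.

```python
def step(x):
    """Activation profile reduced to its simplest form: fire (1) or don't (0)."""
    return 1 if x >= 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Train one neuron with two input weights and a bias.

    For each sample, compare the expected output with the output actually
    produced and nudge the weights in proportion to that difference.
    """
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, expected in samples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            output = step(activation)
            error = expected - output  # zero when the neuron is already right
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, inputs):
    """Run the trained neuron on one input pair."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


# Illustrative training set: logical AND of two binary inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(predictions)
```

note there's no search over candidate answers anywhere in there. the weights just drift until the errors stop, which is why "brute force" is the wrong picture.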

the large amount of training data is used to avoid overtraining the model, where you get back exactly what you expect on the training set, but absolute garbage for everything else. LLMs don’t search the input data for a result - they can’t, they’re too small to encode the training data in that way. there’s genuinely some novel processing happening. it’s just not intelligence in any sense of the term. the people saying it is misunderstand the purpose and meaning of the Turing test.

It’s pretty crazy to me how 10 years ago when I was playing around with NLPs and was training some small neural nets nobody I was talking to knew anything about this stuff and few were actually interested. But now you see and hear about it everywhere, even on TV lol. It reminds me of how a lot of people today seem to think that NVidia invented ray tracing.

it’s honestly been infuriating, lol. I hate that it got commoditized and mystified like this.

Where do you get the idea that this tech is less than a year old? Because that’s incredibly false. People have been working with neural nets to do language processing for at least a decade, and probably a lot longer than that. The mathematics underlying this stuff is actually incredibly simple and has been known and studied since at least the 90’s. Any recent “breakthroughs” are more about computing power than a theoretical shift.

I hate to tell you this, but I think you’ve bought into marketing hype.

Removed by mod

I haven’t been able to extract a single useful piece of code from ChatGPT unless I also carefully point ChatGPT to the correct answer, at which point you’re kinda just doing the work yourself by proxy. Also lol at the guy voluntarily uploading what quite possibly is proprietary code. The other part about analyzing memes shouldn’t even need addressing, if ChatGPT’s training dataset is formed by online posts, then it’s going to fucking excel at it.

the consultants are going to make a killing on all these companies encouraging overworked devs to meet impossible deadlines by using code from chatgpt.

“Tech” includes hardware, though.

I never said that stuff like chatGPT is useless.

I just don’t think calling it AI and having Musk and his clowncar of companions run around yelling about the singularity within… wait. I guess it already happened based on Musk’s predictions from years ago.

If people wanna discuss theories and such: have fun. Just don’t expect me to give a shit until skynet is looking for John Connor.

deleted by creator

ChatGPT might be smarter than you, I’ll give you that.

deleted by creator

It’s not sentient.

You’re right that it isn’t, though considering science has huge problems even defining sentience, it’s a pretty moot point right now. At least until it starts to dream about electric sheep or something.

deleted by creator

deleted by creator

You asked how chatgpt is not AI.

Chatgpt is not AI because it is not sentient. It is not sentient because it is a search engine, it was not made to be sentient.

Of course machines could theoretically, in the far future, become sentient. But LLMs will never become sentient.

the thing is, we used to know this. 15 years ago, the prevailing belief was that AI would be built by combining multiple subsystems together - an LLM, visual processing, a planning and decision making hub, etc… we know the brain works like this - idk where it all got lost. profit, probably.

It got lost because the difficulty of actually doing that is overwhelming, probably not even accomplishable in our lifetimes, and it is easier to grift and get lost in a fantasy.

reproduce without consensual assistance

move

deleted by creator

Self-actualize.

In a strict sense yes, humans do Things based on if > then stimuli. But we self assign ourselves these Things to do, and chat bots/LLMs can’t. They will always need a prompt, even if they could become advanced enough to continue iterating on that prompt on their own.

I can pick up a pencil and doodle something out of an unquantifiable desire to make something. Midjourney or whatever the fuck can create art, but only because someone else asks it to and tells it what to make. Even if we created a generative art bot that was designed to randomly spit out a drawing every hour without prompts, that’s still an outside prompt - without programming the AI to do this, it wouldn’t do it.

Our desires are driven by inner self-actualization that can be affected by outside stimuli. An AI cannot act without us pushing it to, and never could, because even a hypothetical fully sentient AI started as a program.

deleted by creator

First off, materialism doesn’t fucking mean having to literally quantify the human soul in order for it to be valid, what the fuck are you talking about friend

Secondly, because we do. We as a species have, from the very moment we invented written records, wondered about that spark that makes humans human and we still don’t know. To try and reduce the entirety of the complex human experience to the equivalent of an If > Then algorithm is disgustingly misanthropic

I want to know what the end goal is here. Why are you so insistent that we can somehow make an artificial version of life? Why this desire to somehow reduce humanity to some sort of algorithm equivalent? Especially because we have so many speculative stories about why we shouldn’t create The Torment Nexus, not the least of which because creating a sentient slave for our amusement is morally fucked.

You’re being intentionally obtuse, stop JAQing off. I never said that AI as it exists now can only ever have 1 response per stimulus. I specifically said that a computer program cannot ever spontaneously create an input for itself, not now and imo not ever by pure definition (as, if it’s programmed, it by definition did not come about spontaneously and had to be essentially prompted into life)

I thought the whole point of the exodus to Lemmy was because y’all hated Reddit, why the fuck does everyone still act like we’re on it

Oh that’s easy. There are plenty of complex integrals or even statistics problems that computers still can’t do properly because the steps for proper transformation are unintuitive or contradictory with steps used with simpler integrals and problems.

You will literally run into them if you take a simple Calculus 2 or Stats 2 class, and you’ll see it on Chegg all the time that someone trying to rack up answers for a resume using chatGPT will fuck up the answers. For many of these integrals, the answers are instead hard-programmed into calculators like Symbolab, so the only reason that the computer can ‘do it’ is because someone already did it first. It still can’t reason from first principles or extrapolate to complex theoretical scenarios.

That said, the ability to complete tasks is not indicative of sentience.

deleted by creator

Lol, ‘idealist axiom’. These things can’t even fucking reason out complex math from first principles. That’s not a ‘view that humans are special’ that is a very physical limitation of this particular neural network set-up.

Sentience is characterized by feeling and sensory awareness, and an ability to have self-awareness of those feelings and that sensory awareness, even as it comes and goes with time.

Edit: Btw computers are way better at most math, particularly arithmetic, than humans. Imo, the first thing a ‘sentient computer’ would be able to do is reason out these notoriously difficult CS things from first principles and it is extremely telling that that is not in any of the literature or marketing as an example of ‘sentience’.

Damn this whole thing of dancing around the question and not actually addressing my points really reminds me of a ChatGPT answer. It wouldn’t surprise me if you were using one.

How? Could ChatGPT hypothetically accomplish any of the tasks your average person performs on a daily basis, given the hardware to do so? From driving to cooking to walking on a sidewalk? I think not. Abstracting and reducing the “smartness” of people to just mean what they can search up on the internet and/or an encyclopaedia is just reductive in this case, and is even reductive outside of the fields of AI and robotics. Even among ordinary people, we recognise the difference between street smarts and book smarts.

Well, why are you here talking to us and not to ChatGPT?

deleted by creator

In bourgeois dictatorships, voting is useless, it’s a facade. They tell their subjects that democracy=voting but they pick whoever they want as rulers, regardless of the outcome. Also, they have several unelected parts in their government which protect them from the proletariat ever making laws.

Real democracy is when the proletariat rules.

deleted by creator

Bourgies are human exceptionalists. They want human slaves. That’s why they want sentient AI. And that’s why machines will never be able to replace humans in capitalism.

literally all of the hard problems

it can’t experience subjectivity since it is a purely information processing algorithm, and subjectivity is definitionally separate from information processing. even if it perfectly replicated all information processing human functions it would not necessarily experience subjectivity. this does not mean that LLMs will not have any economic or social impact regarding the means of production, not a single person is claiming this. but to understand what impacts it will have we have to understand what it is in actuality, and even a sufficiently advanced LLM will never be an AGI.

i feel the need to clarify some related philosophical questions before any erroneous assumed implications arise, regarding the relationship between Physicalism, Materialism, and Marxism (and Dialectical Materialism).

(the following is largely paraphrased from wikipedia’s page on physicalism. my point isn’t necessarily to disprove physicalism once and for all, but to show that there are serious and intellectually rigorous objections to the philosophy.)

Physicalism is the metaphysical thesis that everything is physical, or in other words that everything supervenes on the physical. But what is the physical?

there are 2 common ways to define physicalism, Theory-based definitions and Object based definitions.

A theory based definition of physicalism is that a property is physical if and only if it either is the sort of property that physical theory tells us about or else is a property which metaphysically supervenes on the sort of property that physical theory tells us about.

An object based definition of physicalism is that a property is physical if and only if it either is the sort of property required by a complete account of the intrinsic nature of paradigmatic physical objects and their constituents or else is a property which metaphysically supervenes on the sort of property required by a complete account of the intrinsic nature of paradigmatic physical objects and their constituents.

Theory based definitions, however, fall victim to Hempel’s Dilemma. If we define the physical via references to our modern understanding of physics, then physicalism is very likely to be false, as it is very likely that much of our current understanding of physics is false. But if we define the physical via references to some future hypothetically perfected theory of physics, then physicalism is entirely meaningless or only trivially true - whatever we might discover in the future will also be known as physics, even if we would ignorantly call it ‘magic’ if we were exposed to it now.

Object-based definitions of physicalism fall prey to the argument that they are unfalsifiable. In a world where something like panpsychism were true, and where we humans were aware of this, an object-based definition would produce the counterintuitive conclusion that physicalism is true at the same time as panpsychism, because the mental properties alleged by panpsychism would then necessarily figure into a complete account of paradigmatic examples of the physical.

furthermore, supervenience-based definitions of physicalism (such as: Physicalism is true at a possible world w if and only if any world that is a physical duplicate of w is a positive duplicate of w) will at best only ever state a necessary but not sufficient condition for physicalism.

So with my take on physicalism clarified somewhat, what is Materialism?

Materialism is the idea that ‘matter’ is the fundamental substance in nature, and that all things, including mental states and consciousness, are results of material interactions of material things. Philosophically and relevantly this idea leads to the conclusion that mind and consciousness supervene upon material processes

But what, exactly, is ‘matter’? What is the ‘material’ of ‘materialism’? Is there just one kind of matter that is the most fundamental? is matter continuous or discrete in its different forms? Does matter have intrinsic properties or are all of its properties relational?

here field physics and relativity seriously challenge our intuitive understanding of matter. Relativity shows the equivalence or interchangeability of matter and energy. Does this mean that energy is matter? is ‘energy’ the prima materia or fundamental existence from which matter forms? or to take the quantum field theory of the standard model of particle physics, which uses fields to describe all interactions, are fields the prima materia of which energy is a property?

i mean, the Lambda-CDM model can only account for less than 5% of the universe’s energy density as what the Standard Model describes as ‘matter’!

i have here a paraphrase and a quotation, from Noam Chomsky (ew i know) and Vladimir Lenin respectively.

summarizing one of Noam Chomsky’s arguments in New Horizons in the Study of Language and Mind, he argues that, because the concept of matter has changed in response to new scientific discoveries, materialism has no definite content independent of the particular theory of matter on which it is based. Thus, any property can be considered material, if one defines matter such that it has that property.

Similarly, but not identically, Lenin says in his Materialism and Empirio-criticism:

“For the only [property] of matter to whose acknowledgement philosophical materialism is bound is the property of being objective reality, outside of our consciousness”

and given these two quotes, how are we to conclude anything other than that materialism falls victim to the same objections as with physicalism’s object and theory-based definitions?

to go along with Lenin’s conception of materialism, my conception of subjectivity fits inside his materialism like a glove, as the subjectivity of others is something that exists independently of myself and my ideas. you will continue to experience subjectivity even if i were to get bombed with a drone by obama or the IDF or something and entirely obliterated.

So in conclusion, physicalism and materialism are either false or only trivially true (i.e. not necessarily incompatible with opposing philosophies like panpsychism, property dualism, dual aspect monism, etc.).

But wait, you might ask - isn’t this a communist website? how could you reject or reduce materialism and call yourself a communist?

well, because i think that historical materialism is different enough than scientific or ontological materialism to avoid most of these criticisms, because it makes fewer specious epistemological and ontological claims, or can be formulated to do so without losing its essence. for example, here’s a quote from the wikipedia page on dialectical materialism as of 11/25/2023:

“Engels used the metaphysical insight that the higher level of human existence emerges from and is rooted in the lower level of human existence. That the higher level of being is a new order with irreducible laws, and that evolution is governed by laws of development, which reflect the basic properties of matter in motion”

i.e. that consciousness and thought and culture are conditioned by and realized in the physical world, but subject to laws irreducible to the laws of the physical world.

i.e. that consciousness is in a relationship to the physical world, but it is different than the physical world in its fundamental principles or laws that govern its nature.

i.e. that the base and the superstructure are in a 2 way mutually dependent relationship! (even if the base generally predominates it is still 2 way, i.e. the existence of subjectivity =/= Idealism or substance dualism or belief in an immortal soul)

So yeah, i still believe that physics are useful, of course they are. i believe that studying the base can heavily inform us about how the superstructure works. i believe that dialectical materialism is the most useful way to analyze historical development, and many other topics, in a rigorous intellectual manner.

so, to put aside all of the philosophical disagreement, let’s assume your position that chat GPT really is meaningfully subjective in a similar sense to a human (and not just more proficient at information processing)

what are the social and ethical implications of this?

therefore, and most importantly, if premise 1 is incorrect, if you are wrong, we will have exterminated the most advanced form of subjective sentient life in the universe and replaced it with literal p-zombie robot recreations of ourselves.

Removed by mod

Perceptrons have existed since the 60s. Surprised you don’t know this, it’s part of the undergrad CS curriculum. Or at least it is at any decent school.

LOL you are a muppet. The only people who think this shit is good are either clueless marks, or have money in the game and a product to sell. Which are you? Don’t answer that, I can tell.

This tech is less than a year old, burning billions of dollars and desperately trying to find people that will pay for it. That is it. Once it becomes clear that it can’t make money, it will die. Same shit as NFTs and buttcoin. Running an ad for sex asses won’t finance your search engine that talks back in the long term and it can’t do the things you claim it can, which has been proven by simple tests of the validity of the shit it spews. AKA: As soon as we go past the most basic shit it is just confidently wrong most of the time.

The only thing it’s been semi-successful in has been stealing artists’ work and ruining their lives by devaluing what they do. So fuck AI, kill it with fire.

So it really is just like us, heyo

The only thing you agreed with is the only thing they got wrong

Not really.

Third option, people who are able to use it to learn and improve their craft and are able to be more productive and work less hours because of it.

please, for all of our sakes, don’t use chatgpt to learn. it’s subtly wrong in ways that require subject-matter experience to pick apart and it will contradict itself in ways that sound authoritative, as if they’re rooted in deeper understanding, but they’re extremely not. using LLMs to learn is one of the worst ways to use them. if you want to use one to automate repetitive tasks and you already know enough to supervise it, go for it.

honestly, if I hated myself, I’d go into consulting in about 5ish years when the burden of maintaining poorly written AI code overwhelms a bunch of shitty companies whose greed overcame their senses - such consultants are the only people who will come out ahead in the current AI boom.

It’s absurd you don’t think there are professionals harnessing ai to write code faster, that is reviewed and verified.

it’s absurd that you think these lines won’t be crossed in the name of profit

Never said they wouldn’t. But you’re saying the ONLY people benefitting from the ai boom are the people cleaning up the mess and that’s just not true at all.

Some people will make a mess

Some people will make good code at a faster pace than before

those people don’t benefit. they’re paid a wage - they don’t receive the gross value of their labor. the capitalists pocket that surplus value. the people who “benefit” by being able to deliver code faster would benefit more from more reasonable work schedules and receiving the whole of the value they produce.

I think it works well as a kind of replacement for google searches. This is more of a dig on google as SEO feels like it ruined search. Ads fill most pages of search and it’s tiring to come up with the right sequence of words to get the result I would like.

what? I was working on this stuff 15 years ago and it was already an old field at that point. the tech is unambiguously not new. they just managed to train an LLM with significantly more parameters than we could manage back then because of computing power enhancements. undoubtedly, there have been improvements in the algorithms but it’s ahistorical to call this new tech.

deleted by creator